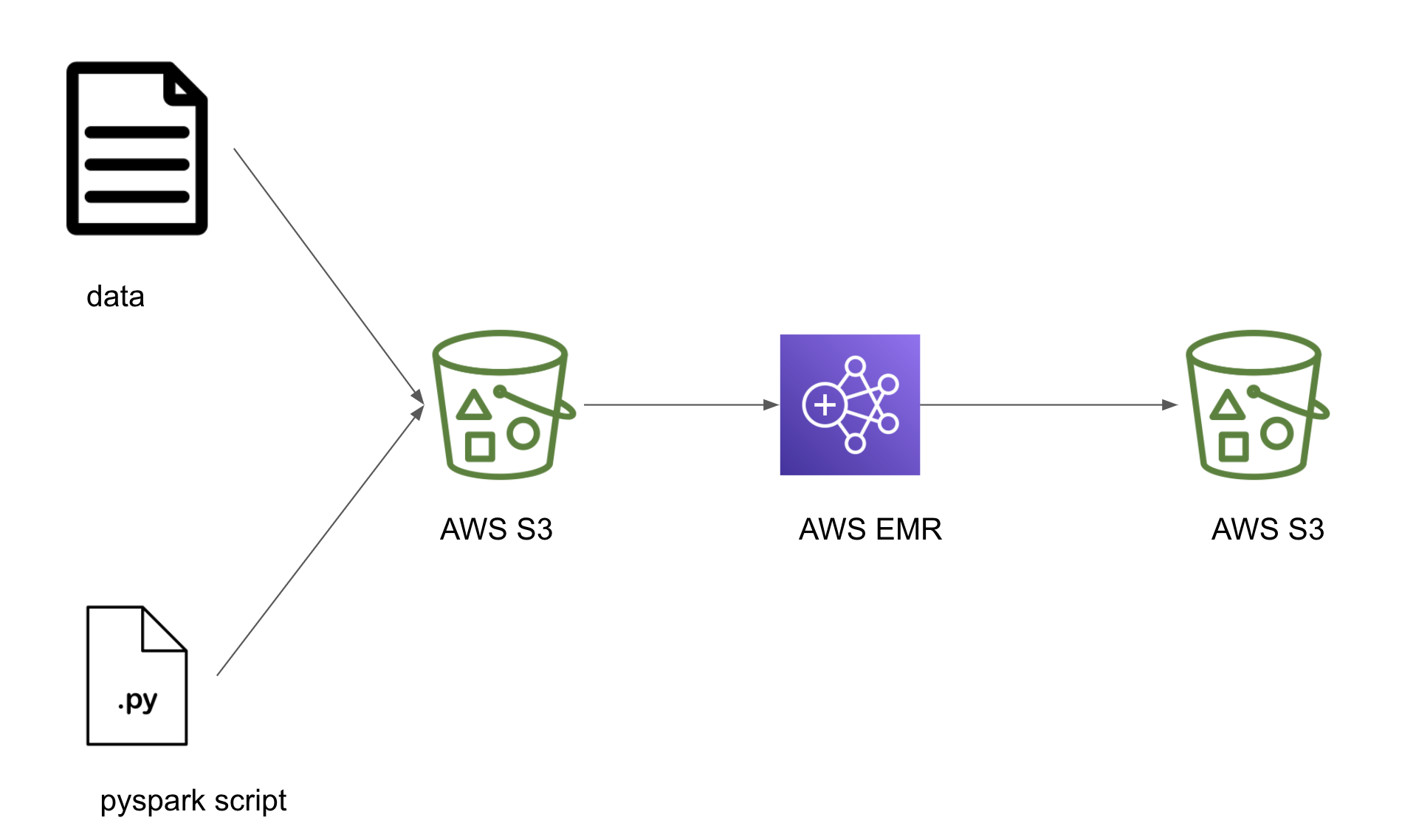

See the License for the # specific language governing permissions and limitations # under the License. You may obtain a copy of the License at # Unless required by applicable law or agreed to in writing, # software distributed under the License is distributed on an # "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY # KIND, either express or implied. The ASF licenses this file # to you under the Apache License, Version 2.0 (the # "License") you may not use this file except in compliance # with the License. See the NOTICE file # distributed with this work for additional information # regarding copyright ownership. Let me know your feedback in the comments below.# Licensed to the Apache Software Foundation (ASF) under one # or more contributor license agreements. I hope this tutorial will help you in adopting airflow easily. Like in this case, I used EMR operators for airflow there by reducing coding efforts drastically. So integrating with other applications is very quick especially when an operator is directly available to use. More and more enterprises are contributing to airflow operators. Since airflow is all Python, I can use it to handle few standard python operations as well rather than completely depending on my codebase to handle it. Like if I have to run similar spark applications for different data sources, I can automate DAG creation and execution process with few lines of code. Airflow gives me this freedom where I can quickly create DAGs and schedule jobs as per requirement.Īutomation to create DAGs is very straightforward. So for me using a scalable orchestration framework with minimum effort is highly desirable. I am a Data Engineer (who is comfortable in Python) and not a member of code deployment team. Since most of the standard features of any schedulers are available in Airflow I would like to share few key reasons why I prefer airflow: I have recently started using airflow and I think I am going to continue to use it for some time now. There are many options which you can use to schedule jobs, from crontab to controlM to other schedulers. INFO line 51: Ending spark application Why I prefer Airflow ? INFO line 48: Writing Parquet File Data - completed INFO line 44: Previewing Parquet File Data INFO line 33: Starting spark application > cat /mnt/var/log/hadoop/steps/s-2Z77NQGX6348N/stdout Get the step-id from the airflow log or EMR console and run the command mentioned below: You can very easily check the log files for step execution in EMR. You can attach custom policy to airflow role to provide required privileges. Make sure that the role AIRFLOW is using must have privileges to add steps in EMR. If the step fails then most likely it is due to permission errors. This should create new STEP in EMR which you can verify on EMR console as well. Refresh the Airflow UI and you shall see new DAG corresponding to this file.Įnable the DAG and trigger it to execute it. Save the file as spark_app_dag.py and put it in the DAG folder of your airflow. # second step to keep track of previous step. # add & edit the args as per your requirement. The very basic command to run this pyspark file with default values should beįrom _add_steps_operator import EmrAddStepsOperatorįrom _step_sensor import EmrStepSensor ("Writing Parquet File Data - completed")ĭf = ("hdfs:///var/data/shoes/") ("Previewing Parquet File Data")ĭf.select("marketplace","customer_id","review_id").show(10,truncate=False)ĭf.limit(100).write.mode("overwrite").parquet("hdfs:///var/data/shoes/")

("Starting spark application")ĭf = ("s3://amazon-reviews-pds/parquet/product_category=Shoes/") Spark = (AppName+"_"+str(dt_string)).getOrCreate() # current time variable to be used for logging purposeĭt_string = datetime.now().strftime("%Y_%m_%d_%H_%M_%S") Handler = logging.StreamHandler(sys.stdout) Formatter = logging.Formatter(' %(levelname)s line %(lineno)d: %(message)s')

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed